The Science Behind Psychometric Scoring: Validity, Bias, and AI Ethics

Building trust in next-gen credit assessment tools

At its core, psychometric scoring is about understanding human behavior—and using that understanding to make informed lending decisions.

Unlike traditional credit scoring, which looks at past financial data such as payment history or credit utilization, psychometric scoring draws on behavioral data. This could include personality traits, cognitive style, decision-making patterns, or even how someone navigates an app.

For example, a psychometric test might ask borrowers to complete a series of timed puzzles, rank their agreement with various statements, or choose between hypothetical financial scenarios. From their responses, a scoring algorithm generates a profile. This profile aims to indicate the likelihood that the person will repay a loan.

These tools are especially valuable in contexts where traditional data is missing. Think gig economy workers, first-time borrowers, rural populations, or people operating outside the banking system. Psychometric scoring can give these individuals a fair shot—if, and only if, the systems are designed with scientific rigor and ethical foresight.

Why Fintechs Are Paying Attention

For fintech companies, psychometric scoring presents an opportunity to:

- Expand into untapped markets: Millions of creditworthy individuals lack a credit score. Psychometric tools can help identify them.
- Streamline onboarding: Digital-first assessments can reduce friction and improve approval rates for new borrowers.
- Strengthen risk models: When used alongside financial and behavioral data, psychometric scoring adds another predictive layer to decision-making.

But there’s also a risk. If these models are poorly designed or implemented without proper oversight, they can alienate users, introduce bias, or fall foul of regulators. The key is to approach psychometric scoring as a scientific discipline—not just a clever feature.

Validity: Can We Trust the Score?

The first and most important question is whether psychometric scores actually work.

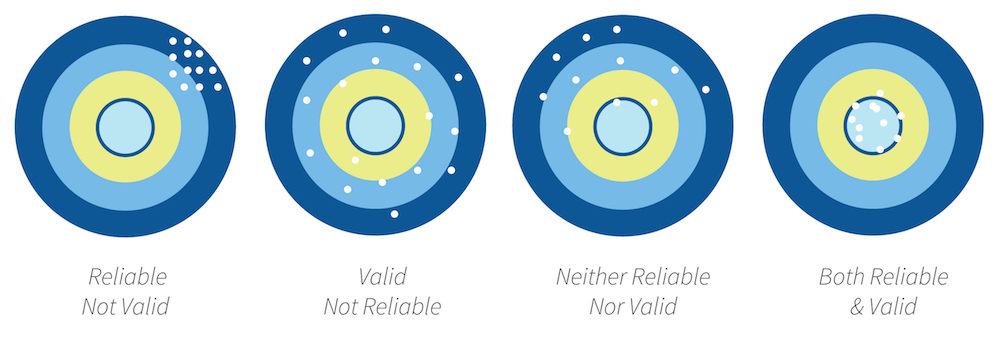

In the world of psychometrics, “validity” refers to whether an assessment measures what it claims to measure—and whether the resulting score can reliably predict a given outcome. For fintech applications, that outcome is typically repayment behavior.

Let’s break down the types of validity you should be looking for in any psychometric scoring solution:

1. Construct Validity

Construct validity asks whether the test is truly measuring the traits it intends to measure.

If a test claims to assess conscientiousness, is it really tapping into behaviors like reliability, persistence, and attention to detail? Or is it conflating those with unrelated traits, like social conformity or anxiety?

Building strong construct validity requires grounding assessments in psychological theory. It also means using multiple question formats to avoid overly simplistic or gamified tests that lack nuance.

2. Criterion Validity

Criterion validity refers to how well a score correlates with real-world behavior, in this case loan repayment.

Lenders need hard data to back up the scoring model. Ideally, this includes:

- Historical data linking psychometric scores to repayment outcomes
- Comparative studies showing predictive performance against traditional credit scores
- Region-specific results that demonstrate effectiveness across borrower populations

A well-designed psychometric model should show a statistically significant link between score bands and repayment risk. Without that, you’re flying blind.
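As a minimal sketch of what that evidence can look like, the Python below groups borrowers into score bands, computes the observed default rate in each band, and reports a rank-based AUC. The function names, band edges, and toy data are all hypothetical, and the sketch stops short of a formal significance test, which a real validation study would add.

```python
from collections import defaultdict


def default_rate_by_band(scores, defaulted, band_edges):
    """Return {band_index: observed default rate}; band 0 is the lowest band."""
    totals = defaultdict(int)
    defaults = defaultdict(int)
    for score, d in zip(scores, defaulted):
        # A score's band is the number of edges it meets or exceeds.
        band = sum(score >= edge for edge in band_edges)
        totals[band] += 1
        defaults[band] += d
    return {band: defaults[band] / totals[band] for band in sorted(totals)}


def auc(scores, defaulted):
    """Chance that a random repayer outscores a random defaulter (ties count half)."""
    good = [s for s, d in zip(scores, defaulted) if d == 0]
    bad = [s for s, d in zip(scores, defaulted) if d == 1]
    if not good or not bad:
        raise ValueError("need at least one repayer and one defaulter")
    wins = sum((g > b) + 0.5 * (g == b) for g in good for b in bad)
    return wins / (len(good) * len(bad))


# Made-up illustration data: 1 = defaulted, 0 = repaid.
scores = [310, 350, 420, 480, 510, 560, 610, 650, 700, 740]
defaulted = [1, 1, 0, 1, 0, 0, 1, 0, 0, 0]

rates = default_rate_by_band(scores, defaulted, band_edges=[450, 600])
model_auc = auc(scores, defaulted)
print(rates, round(model_auc, 3))
```

In this toy data the default rate falls from about 67% in the lowest band to 25% in the highest, and the AUC is about 0.83. A real study would need far more borrowers per band before reading anything into such a pattern.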

3. External Validity

External validity is about how well a model generalizes across populations. A psychometric tool built and validated in Brazil may not perform the same way in India or Kenya unless it has been adapted and tested in those contexts.

Cultural norms, language subtleties, and even smartphone literacy can impact how borrowers interpret questions. That’s why localization—and localized validation—is essential.

4. Ongoing Validation

Scoring models are not set-it-and-forget-it tools. They need to evolve with user behavior, economic conditions, and lending environments. Fintechs should work with scoring providers who commit to continuous improvement, A/B testing, and model retraining.

If you’re using psychometric scores in your credit decisions, make sure you’re also collecting feedback data to assess how well the scores perform over time.
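One way to use that feedback data, sketched here under assumptions, is to recompute a discrimination metric for each disbursement cohort and flag cohorts that fall below a baseline. The cohort labels, the baseline of 0.75, and the tolerance of 0.05 are hypothetical placeholders, not recommended values.

```python
from collections import defaultdict


def auc(scores, defaulted):
    """Chance that a random repayer outscores a random defaulter (ties count half)."""
    good = [s for s, d in zip(scores, defaulted) if d == 0]
    bad = [s for s, d in zip(scores, defaulted) if d == 1]
    if not good or not bad:
        raise ValueError("need at least one repayer and one defaulter")
    wins = sum((g > b) + 0.5 * (g == b) for g in good for b in bad)
    return wins / (len(good) * len(bad))


def auc_by_cohort(records):
    """records: iterable of (cohort, score, defaulted) tuples."""
    grouped = defaultdict(lambda: ([], []))
    for cohort, score, d in records:
        grouped[cohort][0].append(score)
        grouped[cohort][1].append(d)
    return {c: auc(s, d) for c, (s, d) in sorted(grouped.items())}


def flag_drift(cohort_auc, baseline, tolerance=0.05):
    """Return cohorts whose AUC is more than `tolerance` below the baseline."""
    return [c for c, a in cohort_auc.items() if a < baseline - tolerance]


# Made-up illustration data: 1 = defaulted, 0 = repaid.
records = [
    ("2024-Q1", 300, 1), ("2024-Q1", 400, 1), ("2024-Q1", 500, 0), ("2024-Q1", 600, 0),
    ("2024-Q2", 300, 0), ("2024-Q2", 400, 1), ("2024-Q2", 500, 1), ("2024-Q2", 600, 0),
]

cohort_auc = auc_by_cohort(records)
drifting = flag_drift(cohort_auc, baseline=0.75)
print(cohort_auc, drifting)
```

Here the second cohort's AUC drops to 0.5, no better than chance, so it is flagged for review. Loans need time to mature before repayment outcomes are known, so recent cohorts will always lag.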

The Bias Problem: Who Gets Left Out?

No system is neutral by default. Even the most well-intentioned scoring tool can introduce bias—if not in its inputs, then in how those inputs are interpreted.

Let’s look at a few ways bias can creep into psychometric scoring.

Biased Test Design

Some psychometric tests are developed using frameworks or references that are culturally Western or gendered. The wording of a question, the design of a scenario, or even the response options can unintentionally favor certain groups over others.

For instance, a risk-aversion question framed around stock trading may make less sense to someone whose experience with risk comes from informal business or subsistence farming.

This kind of design flaw can lead to distorted scores for people who don’t relate to the underlying assumptions in the test.

Biased Training Data

Many psychometric models use machine learning to optimize scoring. That’s not inherently bad, but it becomes a problem when the training data reflects biased or incomplete information.

If historical lending patterns show higher default rates among low-income borrowers, an AI trained on that data might learn to downgrade applicants from certain socioeconomic backgrounds, regardless of their actual behavior.

The challenge is that machine learning models are only as fair as the data they’re trained on. Without active measures to address bias, they can perpetuate the very exclusion they’re meant to solve.

Hidden Proxies for Demographics

Even when demographic data like age or gender is excluded, other variables in the model may act as proxies. For example, response times, word choices, or game performance might correlate with education level or access to digital devices—factors that themselves reflect deeper structural inequalities.

This can lead to a situation where people are unfairly penalized, not because they are untrustworthy borrowers, but because they belong to a marginalized group.
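A rough first screen for proxies, assuming demographic attributes are collected separately for auditing only, is to check how strongly each model input correlates with a group indicator. The feature names, the group flag, and the 0.5 threshold below are hypothetical, and a single-feature correlation will miss proxies that only emerge from combinations of inputs.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("constant input has no defined correlation")
    return cov / sqrt(var_x * var_y)


def proxy_candidates(features, group_flag, threshold=0.5):
    """Return {feature_name: r} for features with |r| >= threshold against a 0/1 flag."""
    flagged = {}
    for name, values in features.items():
        r = pearson(values, group_flag)
        if abs(r) >= threshold:
            flagged[name] = r
    return flagged


# Made-up illustration data; the flag marks applicants with limited device access.
features = {
    "median_response_seconds": [4.1, 3.8, 4.5, 9.2, 8.7, 9.9],
    "puzzle_accuracy": [0.7, 0.5, 0.8, 0.6, 0.75, 0.55],
}
limited_device_access = [0, 0, 0, 1, 1, 1]

flagged = proxy_candidates(features, limited_device_access)
print(flagged)
```

In this toy data only response time is flagged, which suggests it may be measuring device familiarity rather than anything about repayment. A flagged feature is a prompt for investigation, not proof of unfairness.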

How to Audit for Bias

Fintechs have a responsibility to ask hard questions of their psychometric providers—or themselves, if building tools in-house. Here are some practices that can help mitigate bias:

- Perform subgroup analysis: Measure how score distributions vary across gender, age, ethnicity, language, and income groups. Are certain groups disproportionately rejected?
- Run differential validity tests: See whether scores are equally predictive of repayment across different populations.
- Conduct fairness audits: Use fairness metrics such as demographic parity, equal opportunity difference, and predictive parity to detect disparate impact.
- Involve diverse voices: Work with local partners, behavioral experts, and community advocates to review the content and structure of assessments.
- Iterate and localize: Adapt assessments for different cultural contexts. Test early and often. Don’t assume that a “universal” test exists.
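The fairness metrics named in the list above can be sketched in a few lines of Python. This is an illustration under a strong assumption: that repayment outcomes are known for every applicant, including those the model would have declined (for example, from a pilot in which everyone was funded). The group labels and data are made up.

```python
def group_metrics(approved, repaid, groups):
    """Per-group approval rate, true positive rate, and precision.

    approved and repaid are 0/1 lists; groups holds a label per applicant.
    """
    stats = {}
    for g in sorted(set(groups)):
        rows = [(a, r) for a, r, grp in zip(approved, repaid, groups) if grp == g]
        n_approved = sum(a for a, _ in rows)
        n_repaid = sum(r for _, r in rows)
        both = sum(a * r for a, r in rows)  # approved and went on to repay
        stats[g] = {
            # Demographic parity compares this rate across groups.
            "approval_rate": n_approved / len(rows),
            # Equal opportunity compares approval among those who did repay.
            "true_positive_rate": both / n_repaid if n_repaid else None,
            # Predictive parity compares repayment among those approved.
            "precision": both / n_approved if n_approved else None,
        }
    return stats


# Made-up illustration data for two hypothetical groups.
approved = [1, 1, 1, 0, 1, 1, 0, 0, 1, 0]
repaid = [1, 1, 0, 1, 1, 1, 1, 0, 1, 1]
groups = ["A"] * 5 + ["B"] * 5

stats = group_metrics(approved, repaid, groups)
impact_ratio = stats["B"]["approval_rate"] / stats["A"]["approval_rate"]
equal_opp_diff = stats["A"]["true_positive_rate"] - stats["B"]["true_positive_rate"]
precision_diff = stats["A"]["precision"] - stats["B"]["precision"]
print(impact_ratio, equal_opp_diff, precision_diff)
```

In this toy data group B is approved half as often as group A, and reliable repayers in group B are approved less often, even though approved B borrowers repay at a higher rate. The metrics can pull in different directions, so an audit should report several rather than optimize one.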

Ultimately, reducing bias isn’t about perfection—it’s about transparency, humility, and continuous improvement.

The Ethics of AI-Driven Scoring

As psychometric tools become more sophisticated, many providers are turning to artificial intelligence to refine their models. AI can help identify patterns in user responses, optimize questions, and personalize scoring in real time.

But with that power comes risk.

Fintechs must recognize that credit scoring—especially when powered by AI—is a high-stakes, high-scrutiny use case. It determines who gets access to capital, who is excluded, and on what terms.

Here are key ethical concerns to keep in mind:

Transparency

Users should understand how they are being evaluated. This doesn’t mean disclosing proprietary algorithms, but it does mean providing:

- Clear explanations of what traits are being assessed
- Simple breakdowns of what a score means
- Access to recourse or re-evaluation in case of disputes

Transparency builds trust, especially when borrowers don’t have a financial history to lean on.

Consent and Data Use

Behavioral data is personal. People have a right to know how their data will be used, how long it will be stored, and whether it will be shared with third parties.

Fintechs must obtain informed, opt-in consent—and not bury it in legalese. Data use policies should be written in plain language and accessible at every stage of the user journey.

Human Oversight

AI systems, no matter how smart, make mistakes. That’s why human oversight is critical. Credit decisions should never be fully automated without a way for real people to intervene, especially when scores are borderline or disputed.

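Some jurisdictions are already moving in this direction. In the European Union, the GDPR restricts decisions based solely on automated processing, and the EU AI Act classifies AI systems used to evaluate creditworthiness as high-risk, which brings human-oversight requirements. Fintechs should anticipate this and prepare accordingly.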

Accountability

Finally, ethical AI means being accountable. That means conducting regular audits, publishing results, and responding publicly to concerns. It also means recognizing when to pull a system offline if it’s causing harm.

The trust of your users is worth far more than the convenience of automation.

Building Trust from the Ground Up

Ultimately, psychometric scoring is about people.

It’s about giving someone a chance when they don’t have the paperwork to prove their worth. It’s about meeting borrowers where they are, understanding their context, and using science—not assumptions—to make lending decisions.

For fintechs, this is both an opportunity and a responsibility. You have the chance to lead the way in ethical innovation, expanding financial access without compromising on fairness or transparency.

Here’s how to get started:

- Vet your partners carefully. Don’t just adopt a scoring tool because it’s trendy. Ask for validation studies, bias audits, and country-specific performance data.
- Pilot before scaling. Test psychometric tools in small, controlled environments. Use real repayment outcomes to evaluate effectiveness.
- Layer your data. Psychometric scoring works best when combined with other signals: transactional data, alternative credit data, and contextual information.
- Listen to users. Gather feedback not just from borrowers who were approved, but also from those who were declined. What was their experience? Did they feel heard?
- Invest in responsible AI practices. Build or partner with teams who understand both the technical and ethical dimensions of AI.

Psychometric scoring is not a silver bullet—but it is a powerful tool. When grounded in science and guided by ethics, it can help fintech companies do what they do best: unlock opportunity, reduce friction, and build meaningful relationships with customers.

As competition intensifies and regulators become more watchful, the fintechs that thrive will be the ones that ask not only what works, but also what’s right.

By understanding the science behind psychometric scoring—its strengths, its limits, and its responsibilities—you’re already taking the first step toward building a more inclusive and trustworthy financial ecosystem.